Agentic AI, Pindrop, and Anonybit are drawing attention together because modern identity fraud is moving faster than traditional security systems. Businesses now face deepfake voice attacks, stolen credentials, social engineering, and contact center fraud that can bypass old authentication methods within seconds. The problem is that many companies still depend on passwords, security questions, manual call center checks, and basic verification steps. These methods worked in the past, but they are weak against advanced AI systems, voice cloning, and fraud attempts that look and sound real. When a caller can combine leaked personal data with a deepfake voice, even trained teams can struggle to verify the real person.

What Is Agentic AI Pindrop Anonybit?

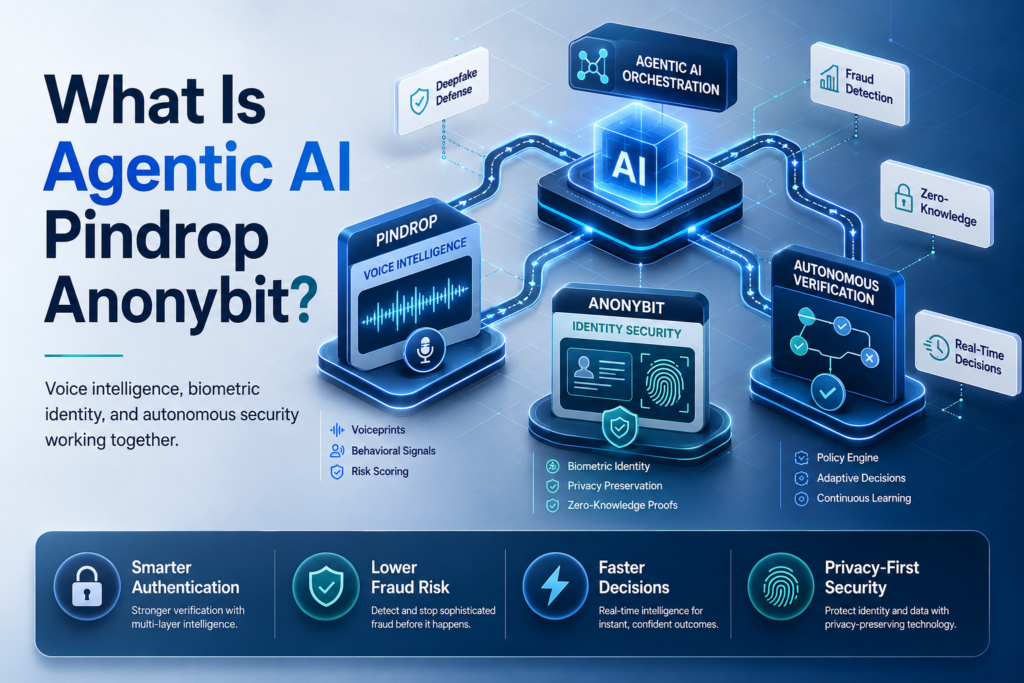

Security teams are facing a new problem. A voice can sound real, a caller can answer old security questions, and a digital credential can still be stolen. This is why agentic AI, Pindrop, and Anonybit have become an important topic for businesses that need smarter identity protection. At a simple level, agentic AI refers to AI systems that can reason, act, and respond with more independence than basic software. Pindrop is associated with voice security and fraud detection, especially in phone-based environments. Anonybit focuses on privacy-preserving biometric protection and secure identity verification.

Why Agentic AI Matters in Modern Security

Agentic AI matters because attackers now move faster than old security tools. Traditional systems often wait for a fixed rule to trigger before they act. An agentic AI system can look at context, behavior, voice signals, biometric data, and risk patterns before recommending or taking action. This does not mean human security teams disappear. It means they get stronger support. An AI agent can monitor signals across multiple channels, flag unusual activity, and help teams respond before a small issue becomes a breach.

How Pindrop Supports Voice Security

Pindrop is often discussed in relation to voice fraud detection and voice authentication. Its role is important because many companies still use phone calls for customer support, banking, insurance, and account recovery. In a contact center, the caller may sound confident and may know personal details. That does not prove the caller is who they claim to be. Pindrop’s technology helps analyze voice, device behavior, and risk signals so a business can verify a caller more safely. Pindrop’s 2025 Voice Intelligence and Security Report says contact centers lost an estimated $12.5 billion to fraud in 2024, with 2.6 million reported fraud events. This shows why stronger voice security is no longer optional for high-risk industries.

How Anonybit Supports Identity Security

Anonybit supports identity security by focusing on privacy-preserving biometric protection. The idea is not just to collect biometric data. The important part is how that data is protected, stored, and used for authentication. Anonybit is designed to reduce the risk of centralized biometric databases. Instead of placing all sensitive biometric data in one vulnerable location, the Anonybit framework supports a decentralized biometric approach. Anonybit describes its platform as enabling decentralized processing of biometric data through a privacy-by-design framework.

Simple Example of an Agentic AI System

Imagine a bank receives a call from someone requesting a wire transfer. The caller knows account details and sounds calm. The system checks voice signals, device behavior, previous call history, authentication time, and transaction risk. If the signals look normal, the verification process can continue smoothly. If something looks suspicious, the system can flag the call, add extra authentication, or route the case to a fraud specialist.
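The decision flow in this example can be sketched in a few lines of Python. This is a minimal illustration, not Pindrop's or Anonybit's implementation: the `CallSignals` fields, the 0 to 1 risk scale, and the 0.5 and 0.8 thresholds are all assumptions chosen for readability.

```python
from dataclasses import dataclass


@dataclass
class CallSignals:
    """Hypothetical per-call risk signals, each from 0.0 (normal) to 1.0 (suspicious)."""
    voice_risk: float
    device_risk: float
    history_risk: float
    transaction_risk: float


def route_call(signals: CallSignals) -> str:
    """Return 'continue', 'step_up', or 'escalate' based on the worst signal."""
    worst = max(
        signals.voice_risk,
        signals.device_risk,
        signals.history_risk,
        signals.transaction_risk,
    )
    if worst >= 0.8:   # assumed threshold: route to a fraud specialist
        return "escalate"
    if worst >= 0.5:   # assumed threshold: ask for extra authentication
        return "step_up"
    return "continue"  # all signals look normal
```

Under these assumed thresholds, a call whose worst signal is 0.6 would be asked for extra authentication rather than being sent straight to a fraud specialist.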

How Pindrop and Anonybit Work Together

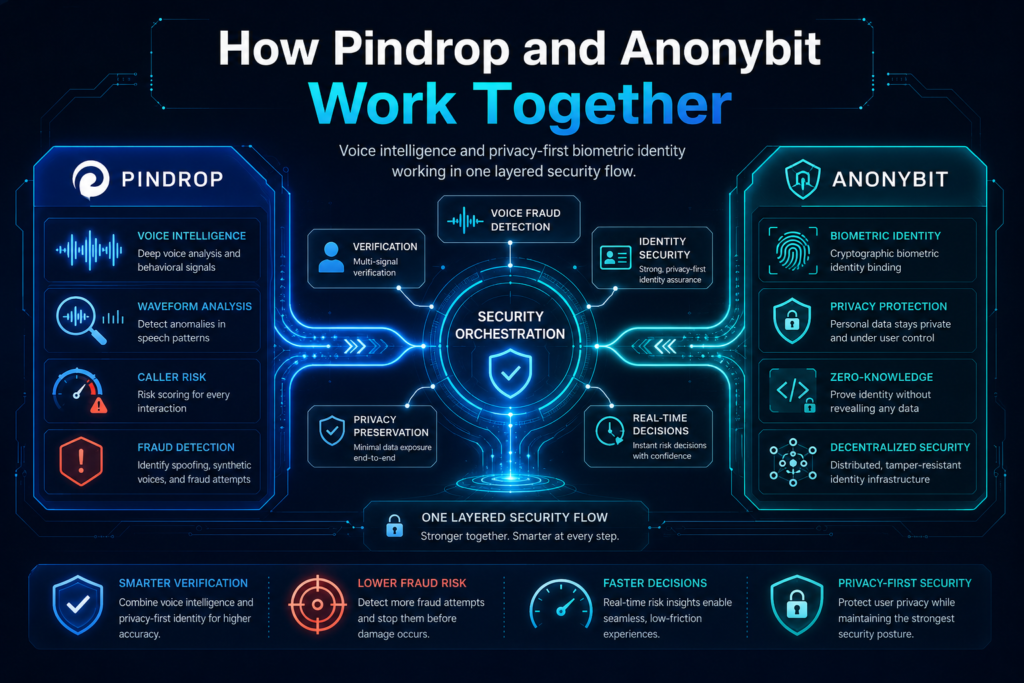

Many companies need more than one security tool. They need a layered system that can protect voice, identity, and biometric data at the same time. This is where understanding how Pindrop and Anonybit work together becomes useful for modern fraud prevention. The combination makes sense because Pindrop focuses on the voice and caller risk layer, while Anonybit focuses on identity security and decentralized biometric protection. Agentic AI can connect these signals and support better decisions in real time.

The Agentic AI Pindrop Anonybit Framework

The agentic AI, Pindrop, and Anonybit framework can be understood as a layered model. One layer detects suspicious voice activity. Another layer supports secure biometric authentication. A third layer uses AI to connect risk signals and guide the next step. This type of security framework is useful because fraud rarely appears in only one place. A criminal may use a stolen credential, a deepfake voice, and leaked personal data in one attack.

A layered system makes it harder for one weakness to open the whole door. Pindrop and Anonybit can support different parts of the identity journey. Pindrop can help identify unusual voice patterns. Anonybit can help protect biometric data. Agentic AI can help decide whether the risk is low, medium, or high.

How the Security System Uses AI

The security system uses AI to study signals that humans may miss. It can compare patterns, identify unusual behavior, and support decisions faster than manual review. For example, if a caller tries to pass voice authentication but the device pattern looks unusual, the system can raise the risk score. If the call includes signs of a deepfake voice, the system can request stronger verification. The goal is not to block every customer but to add checks only where the risk justifies them.
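One way to picture this kind of risk scoring is a weighted combination of signals. The sketch below is illustrative only: the function names, the three inputs, the weights, and the 0.25 cutoff are assumptions, not values published by any vendor.

```python
def risk_score(voice_match: float, device_anomaly: float, deepfake_likelihood: float) -> float:
    """Combine three hypothetical signals (each 0.0 to 1.0) into one risk score.

    voice_match is a similarity value, so a LOW match raises risk;
    the other two inputs raise risk directly.
    """
    for value in (voice_match, device_anomaly, deepfake_likelihood):
        if not 0.0 <= value <= 1.0:
            raise ValueError("signals must be between 0.0 and 1.0")
    # Assumed weights that sum to 1.0, so the score also stays in [0, 1].
    score = 0.4 * (1.0 - voice_match) + 0.3 * device_anomaly + 0.3 * deepfake_likelihood
    return round(score, 3)


def needs_stronger_verification(score: float, threshold: float = 0.25) -> bool:
    """Request extra checks once the score reaches the assumed threshold."""
    return score >= threshold
```

With these weights, a caller whose voice matches perfectly but whose device looks fully anomalous still scores 0.3, which is enough to trigger stronger verification at the assumed cutoff.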

Where Decentralized Biometric Protection Fits

Decentralized biometric protection fits into the identity layer. Anonybit uses a model that helps decentralize biometric protection so that no single, complete biometric template sits in one place. This matters for breach prevention. If attackers target one database, they should not be able to steal a complete, usable biometric record. A decentralized biometric model can reduce the danger of storing sensitive identity information in one central location. Public patent information for decentralized biometric identification describes zero-knowledge authentication in which no individual node has complete knowledge of a biometric template.
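The general idea that no single node holds a complete template can be illustrated with simple XOR secret sharing. This is a textbook technique used here purely as an analogy. It is not Anonybit's method, which this article does not describe in detail, and the function names are hypothetical. A production system would also aim to match biometrics without ever reassembling the template in one place.

```python
import secrets
from typing import List


def split_template(template: bytes, n_shares: int) -> List[bytes]:
    """Split a byte string into n XOR shares; every share is needed to rebuild it."""
    if n_shares < 2:
        raise ValueError("need at least two shares")
    # The first n-1 shares are random; each one alone reveals nothing.
    shares = [secrets.token_bytes(len(template)) for _ in range(n_shares - 1)]
    last = template
    for share in shares:
        last = bytes(a ^ b for a, b in zip(last, share))
    shares.append(last)  # final share = template XOR all random shares
    return shares


def combine_shares(shares: List[bytes]) -> bytes:
    """XOR all shares together to recover the original bytes."""
    result = bytes(len(shares[0]))  # start from all-zero bytes
    for share in shares:
        result = bytes(a ^ b for a, b in zip(result, share))
    return result
```

Each share would be stored on a different node. An attacker who compromises one node obtains bytes that are statistically indistinguishable from random data.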

Why This Framework Reduces Risk

This framework reduces risk because it does not trust any one signal alone. A password can be stolen, a voice can be cloned, and personal data can leak. When multiple layers work together, attackers face stronger resistance. The short answer is to treat identity as a layered process. Voice, biometric data, device behavior, transaction risk, and contextual signals should work together instead of separately.

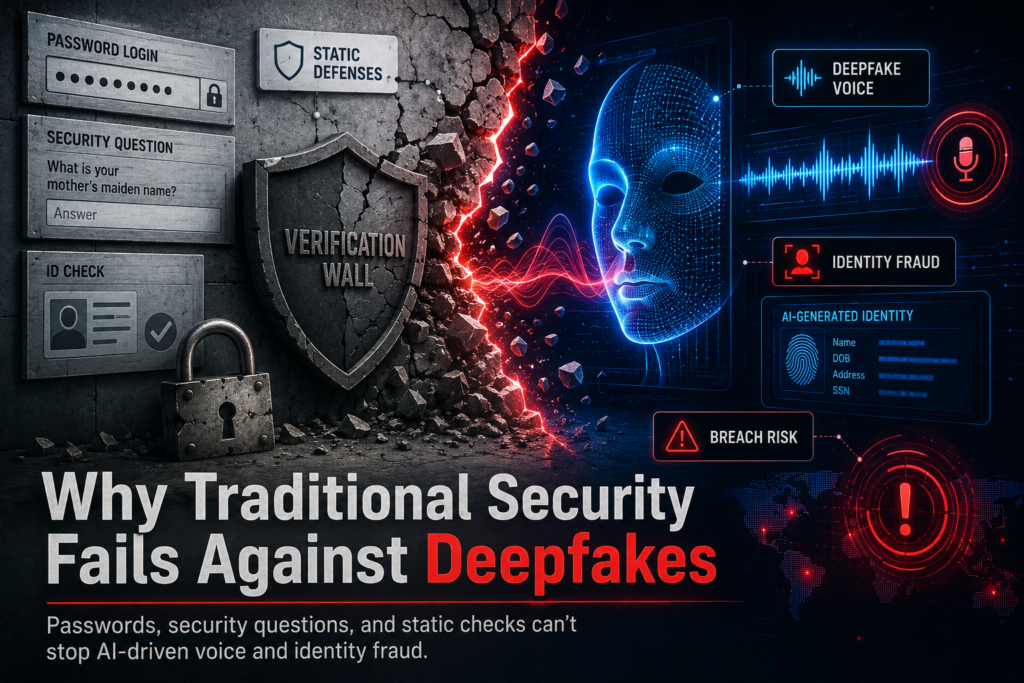

Why Traditional Security Fails Against Deepfakes

Many companies still rely on legacy security questions, passwords, and basic call center scripts. These methods were built for an older threat environment. They were not designed for deepfakes, synthetic voices, and fast-moving social engineering. Traditional security fails because it often checks what a person knows instead of proving who the person is. If attackers have stolen personal data, they can answer many questions correctly. That makes modern identity fraud harder to stop.

How Deepfake Voice Attacks Bypass Static Defenses

A deepfake voice can imitate a person closely enough to fool an untrained listener. In a call center, an agent may hear a familiar tone and assume the caller is legitimate. Static defenses are weak against this because they cannot always identify synthetic speech or unusual audio patterns. Voice deepfakes can also be used during account takeover attempts, payment changes, executive impersonation, and support desk attacks. The problem becomes worse when criminals combine synthetic audio with leaked personal information.

Why Passwords and Security Questions Are Weak

Passwords and security questions are weak because attackers can steal, guess, or buy them. A credential may have been exposed in a previous breach. Security answers may be found through public records or social media. This is why old identity checks create false confidence. A caller who knows a birth date or address may still be a criminal. A stronger process must verify identity through multiple signals.

How Voice Deepfakes Increase Identity Fraud

Voice deepfakes increase identity fraud because they attack trust directly. Humans naturally trust what they hear, especially when the voice sounds familiar or confident. Criminals can use a deepfake to pressure an agent, request account access, or push through a high-value change. They may also use social engineering to create urgency.

Common Fraud Patterns Security Teams Should Flag

Security teams should flag sudden changes in voice behavior, unusual call origins, repeated failed attempts, high-value requests, and pressure tactics. They should also watch for changes in device patterns and strange timing. The practical takeaway is clear. Legacy security cannot defend against modern fraud by itself. Businesses need stronger verification and better risk intelligence.

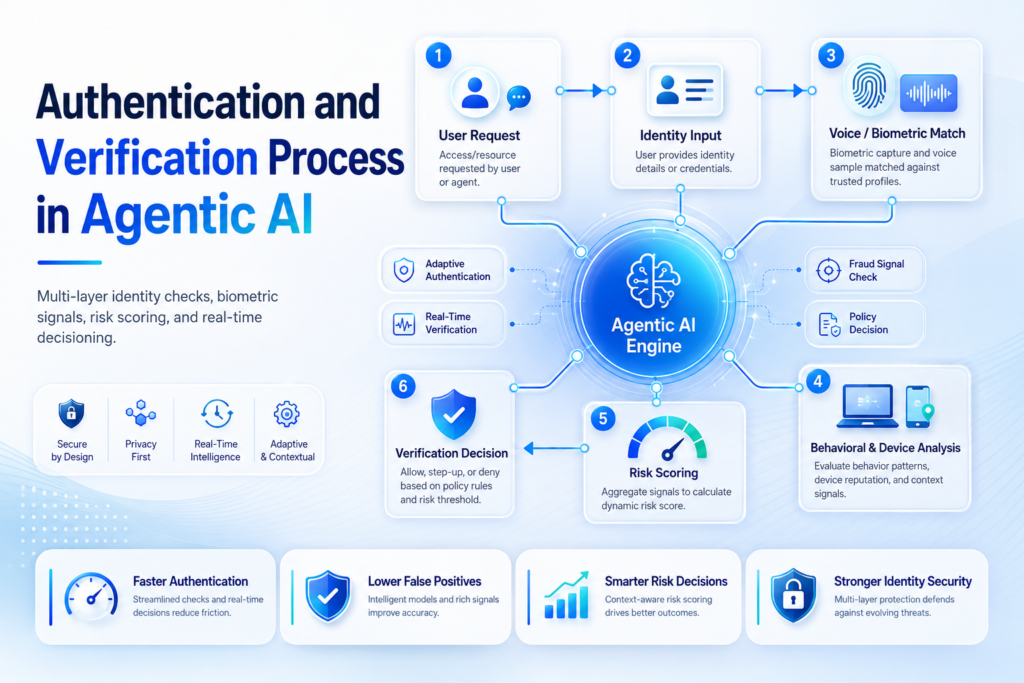

Authentication and Verification Process in Agentic AI

Many businesses worry that stronger authentication will frustrate customers. That concern is real. A good system must improve security without making every honest user go through a slow process. Agentic AI can help by adjusting the verification process based on risk. Low-risk users can move faster, while risky activity gets extra checks. This creates a smarter balance between safety and convenience.

How Voice Authentication Helps Verify a Caller

Voice authentication helps verify a caller by checking voice characteristics and comparing them with known signals. It may work alongside device intelligence, call metadata, and behavior patterns. The system should not depend on voice alone. Voice authentication is stronger when it works with other risk indicators. If the voice seems normal but the device looks suspicious, the system should still be careful. This layered approach helps reduce blind trust. It also helps agents serve real customers faster when risk is low.

How Biometric Data Supports Identity Verification

Biometric data supports identity verification by using human characteristics that are harder to steal than basic passwords. This may include voice, face, fingerprint, or other biometric factors. Facial recognition and iris scans may also play a role in some identity flows. However, businesses must protect biometric data carefully because it is highly sensitive. Security should include privacy controls, limited data access, and strong storage design. Anonybit stores biometric data in a way that supports decentralized protection rather than a single exposed repository.

How Real Time Detection Works Within Seconds

Real-time detection works by checking signals as the interaction happens. The system can evaluate voice, behavior, transaction context, and risk indicators within seconds. This is useful in a call center because decisions must happen quickly. If the system takes too long, agents may become frustrated and customers may be left waiting. If the system is too loose, fraud attempts may pass.

How to Reduce False Positive Results

A false positive happens when the system treats a real customer as suspicious. Too many false positives can damage trust and increase support costs. To reduce false positives, businesses should test the system carefully before full deployment. They should monitor accuracy, review edge cases, and adjust thresholds based on real customer behavior. The short answer is to make authentication adaptive. Do not apply the same friction to every customer. Match the level of verification to the level of risk.
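Threshold tuning of this kind can be sketched as a small search over candidate cutoffs. The example assumes a team has risk scores for calls already confirmed as genuine; the function names, candidate thresholds, and target rate are hypothetical.

```python
from typing import Iterable, Optional, Sequence


def false_positive_rate(genuine_scores: Sequence[float], threshold: float) -> float:
    """Share of genuine callers whose risk score would be flagged at this threshold."""
    if not genuine_scores:
        raise ValueError("need at least one genuine score")
    flagged = sum(1 for score in genuine_scores if score >= threshold)
    return flagged / len(genuine_scores)


def pick_threshold(
    genuine_scores: Sequence[float],
    candidates: Iterable[float],
    max_fpr: float,
) -> Optional[float]:
    """Return the lowest candidate threshold whose false positive rate is within max_fpr."""
    for threshold in sorted(candidates):
        if false_positive_rate(genuine_scores, threshold) <= max_fpr:
            return threshold
    return None  # no candidate meets the target
```

Picking the lowest acceptable threshold keeps detection as sensitive as possible while holding customer friction under the chosen limit. A fuller evaluation would also check how many fraudulent calls each threshold misses.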

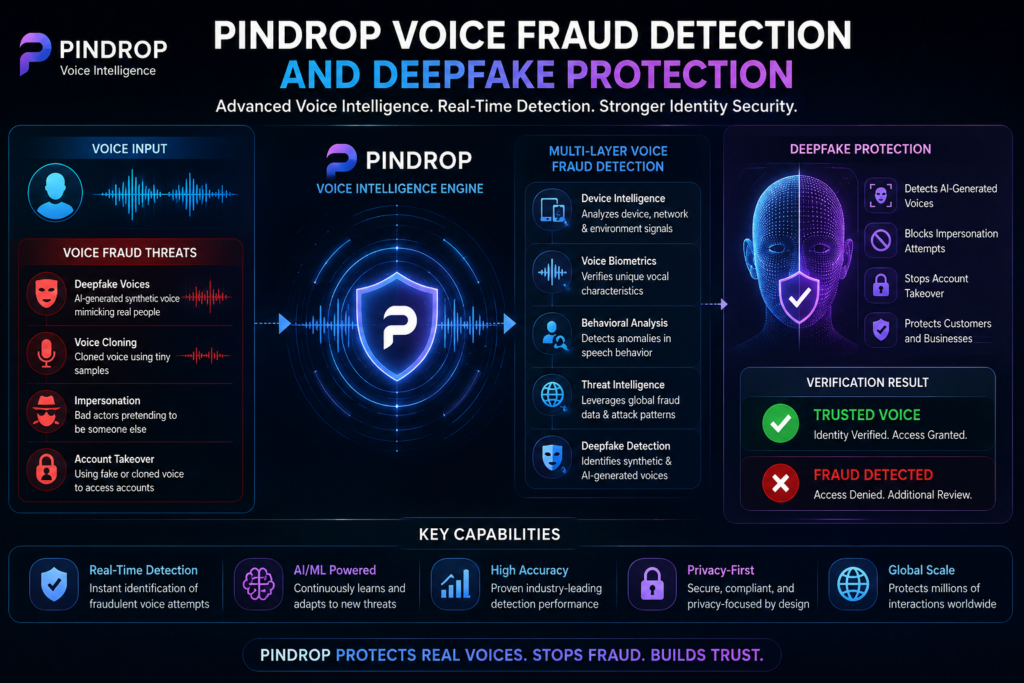

Pindrop Voice Fraud Detection and Deepfake Protection

Voice channels are still important for customer support, banking, insurance, healthcare, and account recovery. That also makes them attractive targets for criminals. Pindrop is relevant because voice fraud is no longer limited to simple impersonation. Deepfake technology has made voice attacks more convincing. Attackers can sound professional, calm, and familiar. Businesses need fraud detection that understands both voice and context.

What Pindrop’s 2025 Voice Intelligence and Security Report Shows

According to Pindrop’s 2025 Voice Intelligence and Security Report, AI-driven fraud threats and deepfake trends are changing how companies should protect contact centers. The report points to growing synthetic voice risk and rising pressure on legacy defenses. Pindrop’s public report page also highlights $12.5 billion in 2024 contact center fraud losses and 2.6 million reported fraud events. These numbers show why phone-based identity checks need stronger protection.

How Pindrop’s Technology Detects Fraud Attempts

Pindrop’s technology is built to help authenticate repeat callers, catch risks in real time, and detect AI-generated threats in contact center environments. Its public product messaging highlights risk detection and authentication for healthcare and other organizations. Fraud attempts can include replayed audio, synthetic speech, stolen data, or suspicious caller behavior. A system that watches only one signal may miss the attack. A stronger system studies several signals together.

Why Voice Prints Matter in Fraud Attacks

Voice prints matter because they can help compare a caller with expected voice patterns. However, voice prints must be protected carefully because they are sensitive biometric identifiers. A voice print adds value when it is used as part of a layered model. It should not be the only security factor. Deepfake tools can make voice matching less reliable, so additional checks are important.

How Detection Can Help Reduce Fraud Losses

Better detection can reduce fraud losses by stopping risky interactions earlier. It can also lower operational pressure because agents do not have to rely only on instinct. When fewer suspicious calls reach manual review and genuine customers are verified faster, teams can protect accounts and serve users more efficiently.

Anonybit Framework to Decentralize Identity Security

Businesses want stronger biometric protection, but they also fear storing sensitive biometric data in one place. That concern is valid. Anonybit helps address this challenge through a privacy-preserving design. Anonybit’s approach focuses on decentralizing identity security rather than creating another central database. This is important for enterprises that want authentication without increasing breach impact.

Why Anonybit Is Designed for Sensitive Biometric Data

Anonybit is designed for situations where sensitive biometric data must be protected without creating a single point of failure. This matters for banking, insurance, workforce access, help desks, and customer verification. The company describes its platform as privacy-preserving biometric authentication for enterprises, insurance, and other use cases. It also positions the platform as a way to secure workforce access, help desk verification, and contact center verification.

How Anonybit Stores Biometric Data Differently

Anonybit stores biometric data differently by avoiding a single centralized biometric record. The system is built around decentralized processing, which helps reduce exposure if one location is attacked. This approach can make a breach less damaging because attackers should not find a complete, reusable biometric template in one place. It also supports privacy requirements because data protection is part of the design, not an afterthought.

How Zero Knowledge Improves Security Solutions

Zero-knowledge methods improve security solutions by allowing verification without exposing full sensitive information. In the context of biometric protection, zero-knowledge methods can help prove identity without giving any one party access to the full biometric template. This is useful because authentication should not require unnecessary exposure. The system should verify the person while limiting what each component can see.

Why Biometric Databases Have Become Prime Targets

Biometric databases have become prime targets because attackers understand the value of permanent identity data. A stolen password can be reset. A stolen biometric pattern cannot be replaced in the same way. The short answer is to reduce central exposure. Businesses should protect biometric data with decentralized storage, privacy controls, and careful access design from the beginning.

Use Cases for Contact Center and Call Center Security

Contact center fraud creates a direct business problem. Agents want to help customers quickly, but fraudsters exploit that helpfulness. This is why use cases for voice biometrics and agentic security are especially strong in phone-based service environments. The main goal is not to make the customer journey difficult. The goal is to verify the right caller quickly and stop risky activity before damage happens.

High Value Transactions and Wire Transfer Protection

High-value transactions need stronger authentication because one mistake can be expensive. A wire transfer request, payment change, account recovery, or beneficiary update should not depend only on a caller answering old security questions. In this type of flow, the system can verify voice signals, biometric status, device risk, and transaction context. If the request is normal, it can continue. If the request looks unusual, extra verification can be added.

Financial Institutions and Fraud Detection Workflows

Financial institutions are strong candidates for agentic AI solutions because they face constant account takeover and payment fraud risk. Their fraud detection workflows must handle speed, trust, and compliance at the same time. A bank or credit union may use voice security in the contact center, biometric verification for customer identity, and AI systems for risk scoring. When these layers work together, fraud teams can prioritize the highest-risk activity.

How AI Agent Support Can Improve Caller Verification

An AI agent can support caller verification by reviewing risk signals and suggesting the next step. It may recommend normal processing, additional authentication, or escalation to a fraud team. This improves consistency because agents do not have to make complex security decisions alone. It can also reduce authentication time for low-risk customers.

Real-Life Contact Center Fraud Example

A caller contacts support and claims they need urgent access because their phone is broken. They know personal details and push the agent to bypass normal checks. The system detects unusual voice patterns and a mismatch in device history. Instead of approving the request, the agent gets a warning and asks for stronger verification. The attempt is stopped before any account access is changed. The practical takeaway is that call center security should combine human judgment with real-time identity intelligence.

Deployment of Agentic AI Security Solutions

A strong security idea can fail if deployment is rushed. Businesses need to plan the rollout carefully, test accuracy, and make sure employees understand how the system works. Deployment should be treated as a security program, not just a software installation. Agentic AI security works best when data, workflows, compliance, and support teams are aligned. The goal is to build trust before scaling.

Planning Deployment Across Multiple Cloud Environments

Large enterprises often operate across multiple systems and cloud environments. That creates complexity because identity signals may come from different tools, regions, and departments. Planning deployment means deciding where the system connects, what data it can access, and how results are shown to agents or analysts.

Managing Data Access and GDPR Requirements

Data access must be limited to what the security process truly needs. Personal data and biometric data should not be visible to every tool or user. GDPR and other privacy rules require careful handling of identity information. Businesses should define retention rules, consent flows, access controls, and audit logs before full rollout. A strong deployment includes privacy review, legal review, and security testing. This protects both the business and the customer.
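Limiting access and keeping an audit trail can be illustrated with a simple field-level filter. The role names, field names, and log format below are hypothetical, and a real deployment would rely on its identity platform's access controls rather than an in-memory dictionary.

```python
from typing import Any, Dict, List, Set

# Assumed mapping of roles to the fields each role may see.
ALLOWED_FIELDS: Dict[str, Set[str]] = {
    "contact_center_agent": {"customer_id", "risk_level"},
    "fraud_analyst": {"customer_id", "risk_level", "device_history"},
}


def view_for_role(
    record: Dict[str, Any],
    role: str,
    audit_log: List[Dict[str, Any]],
) -> Dict[str, Any]:
    """Return only the fields a role is allowed to see and log the access."""
    allowed = ALLOWED_FIELDS.get(role, set())  # unknown roles see nothing
    visible = {key: value for key, value in record.items() if key in allowed}
    # Record which fields were released, not their values.
    audit_log.append({"role": role, "fields": sorted(visible)})
    return visible
```

In this sketch an agent sees a risk level but never a biometric reference, and every lookup leaves a log entry that a privacy review can inspect later.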

How Enterprise Security Teams Should Test AI Services

Enterprise security teams should test AI services with real business scenarios. They should include normal callers, risky callers, edge cases, noisy audio, account recovery attempts, and high-risk transaction requests. Testing should measure accuracy, false positive rate, false negative rate, authentication time, and agent experience. A system that is accurate but too slow may create operational problems.
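The measurements listed above can be computed from a labeled set of test calls. The sketch below assumes each test scenario records whether the call was really fraud, whether the system flagged it, and how long authentication took; the `ScenarioResult` type and its fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ScenarioResult:
    """One labeled test call (hypothetical structure)."""
    is_fraud: bool       # ground truth from the test plan
    flagged: bool        # what the system decided
    auth_seconds: float  # time spent on authentication


def summarize(results: List[ScenarioResult]) -> Dict[str, float]:
    """Compute accuracy, error rates, and mean authentication time."""
    if not results:
        raise ValueError("need at least one result")
    genuine = [r for r in results if not r.is_fraud]
    fraud = [r for r in results if r.is_fraud]
    correct = sum(1 for r in results if r.flagged == r.is_fraud)
    return {
        "accuracy": correct / len(results),
        # Genuine callers wrongly flagged.
        "false_positive_rate": sum(r.flagged for r in genuine) / len(genuine) if genuine else 0.0,
        # Fraudulent callers that slipped through.
        "false_negative_rate": sum(not r.flagged for r in fraud) / len(fraud) if fraud else 0.0,
        "mean_auth_seconds": sum(r.auth_seconds for r in results) / len(results),
    }
```

Agent experience is not captured by these numbers and would need separate feedback, for example from surveys or call reviews.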

Deployment Checklist for Safer Authentication

A safer deployment should define the protected use case, map the verification process, test the model, set escalation rules, document privacy controls, and train support teams. The short answer is to start with one controlled area. After results are stable, expand to more channels and more risk types.

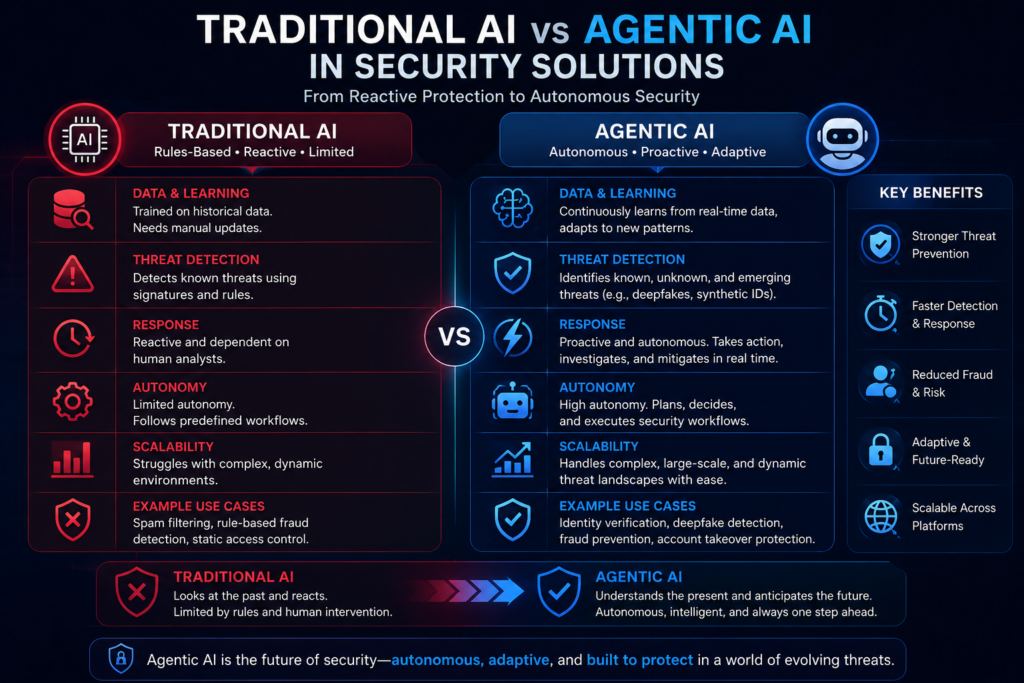

Traditional AI vs Agentic AI in Security Solutions

Many readers hear "traditional AI" and "agentic AI" and wonder what the difference really means. The difference is not only technical. It affects how quickly a business can detect, decide, and respond to fraud. Traditional AI often classifies or predicts. Agentic AI can support a more active process. It can interpret signals, recommend action, and help coordinate the next step.

What Makes Agentic AI Different from Traditional AI

What makes agentic AI different is its ability to operate with more goal-directed behavior. Traditional AI may score a call as risky. Agentic AI may use that score, check other signals, and suggest a verification path. This does not mean agentic AI should run without rules. Autonomous AI still needs guardrails, testing, and human oversight. The benefit comes from combining speed with control.
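A guardrail of this kind can be as simple as a wrapper that checks whatever action an agent proposes before it takes effect. In the sketch below, the action names and the rule that blocking a caller needs human approval are assumptions made for illustration.

```python
# Assumed set of actions the agent is permitted to propose.
ALLOWED_ACTIONS = {"continue", "step_up", "escalate", "block"}
# Assumed set of high-impact actions that require a human decision.
NEEDS_HUMAN_APPROVAL = {"block"}


def apply_guardrails(proposed_action: str, human_approved: bool = False) -> str:
    """Return the action that may actually run, given the agent's proposal."""
    if proposed_action not in ALLOWED_ACTIONS:
        # Anything outside the approved list goes to a person instead.
        return "escalate"
    if proposed_action in NEEDS_HUMAN_APPROVAL and not human_approved:
        # High-impact actions wait for human sign-off.
        return "escalate"
    return proposed_action
```

The agent still moves quickly on routine decisions, while unusual or high-impact proposals fall back to escalation.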

How Autonomous AI Responds to Fraud Attempts

Autonomous AI can respond to fraud attempts by adjusting the next step based on risk. A low-risk caller may pass quickly. A suspicious caller may face more checks. A high-risk request may be stopped or escalated. This response model is useful against modern attackers because fraud patterns change often. Static defenses cannot keep up with every new tactic.

Why Legacy Security Solutions Need Advanced AI

Legacy security solutions were built for simpler threats. They often depend on rules, passwords, security questions, and manual review. These methods struggle when attackers use deepfake tools, stolen credentials, and social engineering. Legacy security can still play a role, but it needs stronger intelligence around it. Adding agentic AI security can help detect threats that fixed rules may miss.

Comparison Table: Legacy Security vs Agentic AI Security

| Security Feature | Legacy Security | Traditional AI | Agentic AI Security |
| --- | --- | --- | --- |
| Threat response | Slow and rule based | Pattern based scoring | Adaptive and context aware |
| Deepfake protection | Weak against synthetic voices | Better detection support | Stronger real time response |
| Identity verification | Passwords and security questions | Risk scoring and matching | Voice, biometric and contextual verification |
| Data protection | Often centralized records | Model based checks | Decentralized biometric and zero knowledge support |
| Contact center use | Agent driven decisions | Assisted fraud review | Guided authentication and escalation |
| Best fit | Basic access control | Fraud pattern analysis | High risk authentication and identity security |

Future of Agentic AI for Identity Security

Identity security is entering a new phase. Attackers are using AI, so defenders need smarter systems too. The future of agentic AI will likely focus on adaptive verification, privacy-preserving biometrics, and stronger fraud prevention across voice and digital channels. Businesses that wait too long may find that old systems no longer match the speed of modern fraud. The safest path is to improve identity protection before the next major attack.

The Advent of Agentic AI in Fraud Prevention

The advent of agentic AI in fraud prevention reflects a larger shift. Security is moving from static checks to intelligent systems that can understand context and act faster. This is important because fraud rings can use automation, stolen data, and synthetic media to attack at scale. If defenders rely only on manual review, they will fall behind.

How Generative AI Changes Social Engineering Risk

Generative AI changes social engineering because attackers can create more convincing messages, voices, and scripts. A scam no longer needs to be poorly written or obvious. A criminal can use a cloned voice, a realistic story, and stolen account details to pressure an employee. This makes training important, but training alone is not enough.

How Agentic AI Solutions Can Protect Personal Data

Agentic AI solutions can protect personal data by identifying unusual access, suspicious behavior, and risky verification attempts. When connected with privacy-preserving biometric systems, they can also reduce reliance on weak secrets. The goal is not to collect more data than necessary. The goal is to use the right signals in a controlled way. Identity security should be strong, private, and practical.

Final Takeaway for Voice Verification and Security

Voice verification should no longer depend only on what a caller says or how natural the voice sounds. Modern systems need to analyze voice, behavior, biometric protection, risk, and context. The final takeaway is simple. Agentic AI, Pindrop, and Anonybit represent a layered way to think about safer identity security. When used carefully, this approach can help businesses fight deepfake fraud, reduce risk, protect biometric data, and keep customer interactions smooth.

Conclusion

The combination of agentic AI, Pindrop, and Anonybit is important because identity fraud is becoming more advanced, more realistic, and harder to detect with old tools. Passwords, security questions, and basic call center checks are no longer enough when criminals can use deepfake voice tools, stolen credentials, and social engineering together. Pindrop adds value in the voice layer by helping organizations detect suspicious audio, support voice authentication, and improve fraud detection in contact center environments. Anonybit adds value in the identity layer by protecting biometric data through a decentralized biometric approach that reduces single-point exposure. Agentic AI connects these signals and helps security teams make faster, smarter decisions.

What is agentic AI Pindrop Anonybit?

Agentic AI Pindrop Anonybit refers to a layered identity security concept that combines agentic AI, Pindrop voice protection, and Anonybit biometric security. It helps businesses improve authentication, detect fraud attempts, protect biometric data, and respond faster to deepfake and voice fraud risks.

How do Pindrop and Anonybit work together?

Pindrop focuses on voice security, caller risk, and fraud detection, while Anonybit focuses on decentralized biometric identity protection. Together, they can support stronger verification by combining voice signals, biometric data, risk patterns, and privacy-focused authentication for contact center and enterprise security use cases.

Why is agentic AI important for identity security?

Agentic AI is important because modern fraud attacks move faster than traditional security systems. It can review multiple signals, detect unusual behavior, flag suspicious activity, and guide the next authentication step. This helps businesses protect users without adding unnecessary friction for trusted customers.

Can agentic AI help detect deepfake voice fraud?

Yes, agentic AI can support deepfake voice fraud detection by analyzing voice patterns, caller behavior, transaction context, and risk signals in real time. It works best when combined with voice authentication, fraud detection tools, and layered verification instead of relying on one security factor.

Why is decentralized biometric security useful?

Decentralized biometric security is useful because it reduces the risk of storing complete biometric data in one central database. If attackers target one system, they should not be able to obtain a full, usable biometric record. This helps protect sensitive biometric data and supports stronger privacy controls.

What are the best use cases for contact centers?

The best use cases include caller verification, account recovery, wire transfer protection, help desk authentication, fraud detection, and high-value transaction review. Contact centers can use agentic AI, Pindrop, and Anonybit to reduce fraud attempts while keeping genuine customer interactions fast and smooth.

Is agentic AI better than traditional security?

Agentic AI can be stronger than traditional security because it responds with more context and flexibility. Traditional security often depends on passwords, rules, and static checks. Agentic AI can analyze risk, verify identity, detect deepfakes, and recommend safer actions during the interaction.

How should a business start deployment?

A business should start with one high-risk verification process, such as call center authentication or high-value transaction approval. The team should test accuracy, false positive rates, authentication time, compliance needs, and user experience before scaling deployment across multiple departments or cloud environments.